Developmental Biology - Visual Neurons

Sight Doesn't Work The Way We Thought

Specific neurons were thought to capture images in defined brain areas...

A new survey of the activity of nearly 60,000 neurons in the mouse visual system reveals how far we still have to go to understand how the brain computes.

Published in the journal Nature Neuroscience, an analysis led by Christof Koch PhD, Chief Scientist and President of the Allen Institute for Brain Science, and co-authored with R. Clay Reid MD, PhD, Senior Investigator, reveals that more than 90% of neurons in the mouse visual cortex don't work the way we thought. Nor is it clear just how they do work.

"We thought there were simple principles according to which neurons process visual information. And those principles are now in all the textbooks. But now that we can survey tens of thousands of cells at once, we get a more subtle, and much more complicated picture."

Christof Koch PhD, Chief Scientist and President, the Allen Institute for Brain Science.

Nearly 60 years ago, neuroscientists David Hubel and Torsten Wiesel made groundbreaking discoveries on how the mammalian brain perceives our visual world. Their work uncovered how individual neurons switch on only in response to very specific types of images.

Hubel and Wiesel made their discoveries in cats and monkeys, showing how these animals see simple pictures - a black bar or dot on a white background, for example. Their general principle says that specific neurons in our brain recognize exact parts of a scene. Over time, that recognition becomes more specialized and fine-tuned in higher-order parts of the brain.

The theory goes: when you look around in a park, one set of neurons will fire a rapid electrical response to a dark tree branch on a precise spot in your line of sight. Other neurons turn on only when a bird flies across your field of vision from left to right. Your brain then stitches together information from the "tree branch" neurons and the "moving bird" neurons to get a complete picture of the world around you.

Hubel and Wiesel's findings were recognized by a Nobel Prize in Physiology or Medicine and formed the backbone of the neural networks that underlie most computer vision applications today.

It Wasn't Clear How Incomplete the Story Was

In the past decade, with the advent of new neuroscience methods that enable the study of more and more brain cells at once, scientists have come to understand that this model of how our brains see is likely not the whole story - some neurons clearly don't follow the classic model of tuning in to specific features.

Neuroscience studies in the 1950s and 60s were, by necessity, like fishing expeditions - researchers hunted through the brain with a single electrode until they found a neuron that reliably responded to a certain image. This is akin to trying to watch a widescreen movie through a few scattered pinholes, Koch explains: it would be impossible to get a complete picture. The Allen Brain Observatory dataset used in the new study doesn't capture the activity of every neuron under every scenario, but it allows researchers to study more neurons at once, including those with more subtle responses.

Brain Activity Variability

The new study is the first large-scale analysis of the publicly available data from the Allen Brain Observatory, a broad survey that captures the activity of tens of thousands of neurons in the mouse visual system. The researchers analyzed the activity of nearly 60,000 different neurons in the visual parts of the cortex, the outermost shell of the brain, as animals see different simple images, photos and short video clips - including the opening shot from the classic Orson Welles movie "Touch of Evil" (chosen as it has a lot of movement and is a single shot with no cuts).

The researchers' new analysis found that fewer than 10% of the nearly 60,000 neurons responded as the textbook model predicts. Of the rest, about two-thirds showed some reliable response, but their responses were more specialized than the classic models would predict. The last third of neurons showed some activity, but they didn't light up reliably to any of the stimuli in the experiment - it's not clear what these neurons are doing, the researchers said.
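To make these proportions concrete, the fractions can be turned into rough neuron counts. This is only a back-of-the-envelope sketch: it rounds "nearly 60,000" up to 60,000 and treats "less than 10%" as exactly 10%, so the first number is an upper bound and the others are approximations, not figures reported by the study.

```python
# Rough breakdown implied by the reported fractions (illustrative only).
total_neurons = 60_000      # assumption: "nearly 60,000" rounded up
textbook_fraction = 0.10    # assumption: upper bound for "less than 10%"

textbook = round(total_neurons * textbook_fraction)  # neurons following the classic model
rest = total_neurons - textbook                       # everything else
reliable_but_specialized = round(rest * 2 / 3)        # "about two-thirds" of the rest
not_reliable = rest - reliable_but_specialized        # "the last third"

print(textbook, reliable_but_specialized, not_reliable)
```

Under these assumptions, roughly 6,000 neurons at most fit the textbook model, about 36,000 respond reliably but in more specialized ways, and about 18,000 do not respond reliably to any stimulus shown.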

"It's not that the previous studies were all a big mistake, it's just that those cells turn out to be a very small fraction of all neurons in the cortex. It turns out that the mouse visual cortex is much more complex and richer than we previously thought, which underscores the value of doing this type of survey."

Saskia de Vries PhD, Assistant Investigator, the Allen Institute for Brain Science. Leader of the study along with Jérôme Lecoq PhD and Michael Buice PhD.

That these more variable, less specific neurons exist is not news. But it was a surprise that they dominate the visual parts of the mouse brain.

How the Brain Computes

It's not yet clear how these other neurons contribute to processing visual information. Other research groups have seen that locomotion can drive neuron activity in the visual part of the brain, but whether the mice were running or still explains only a small amount of the variability in visual responses, the researchers found.

Their next steps are to run similar experiments with more natural movies, offering the neurons a larger set of visual features to respond to. Buice has made a 10-hour specialized reel of clips from pretty much every nature documentary he could get his hands on.

The researchers also point out that the classic model came from studies of cats and primates, animals which both evolved to see their worlds in sharper focus at the center of their gaze than did mice. It's possible that mouse vision is just a completely different ballgame from ours. But there are still principles from these studies that might apply to our own brains, said Buice, an Associate Investigator at the Allen Institute for Brain Science.

"Our goal was not to study vision; our goal was to study how the cortex computes. We think the cortex has a structure of computation that's universal, similar to the way different types of computers can run the same programs. In the end, it doesn't matter what kind of program the computer is running; we want to understand how it runs programs at all."

Michael A. Buice PhD, Allen Institute for Brain Science, Seattle, Washington, USA.

Abstract

To understand how the brain processes sensory information to guide behavior, we must know how stimulus representations are transformed throughout the visual cortex. Here we report an open, large-scale physiological survey of activity in the awake mouse visual cortex: the Allen Brain Observatory Visual Coding dataset. This publicly available dataset includes the cortical activity of nearly 60,000 neurons from six visual areas, four layers, and 12 transgenic mouse lines in a total of 243 adult mice, in response to a systematic set of visual stimuli. We classify neurons on the basis of joint reliabilities to multiple stimuli and validate this functional classification with models of visual responses. While most classes are characterized by responses to specific subsets of the stimuli, the largest class is not reliably responsive to any of the stimuli and becomes progressively larger in higher visual areas. These classes reveal a functional organization wherein putative dorsal areas show specialization for visual motion signals.
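The idea of classifying neurons by their joint reliabilities across several stimuli can be illustrated with a toy example. The sketch below is not the authors' method: the stimulus names, the reliability values, and the 0.3 cutoff are all hypothetical, and a simple threshold stands in for whatever statistical procedure the study actually used. It only shows how neurons could be grouped by the subset of stimuli they respond to reliably, including a class that responds reliably to none.

```python
# Hypothetical sketch: group neurons by which stimuli they respond to reliably.
# Stimulus names, reliability values, and the threshold are illustrative
# assumptions, not values from the study.
from collections import Counter

THRESHOLD = 0.3  # assumed cutoff for calling a response "reliable"


def functional_class(reliabilities, threshold=THRESHOLD):
    """Return the sorted tuple of stimuli whose reliability exceeds the threshold."""
    return tuple(sorted(name for name, r in reliabilities.items() if r > threshold))


# Made-up per-neuron reliabilities for three made-up stimulus types.
neurons = [
    {"gratings": 0.8, "natural_scenes": 0.1, "natural_movies": 0.2},
    {"gratings": 0.1, "natural_scenes": 0.6, "natural_movies": 0.7},
    {"gratings": 0.05, "natural_scenes": 0.1, "natural_movies": 0.1},
    {"gratings": 0.2, "natural_scenes": 0.0, "natural_movies": 0.15},
]

class_counts = Counter(functional_class(n) for n in neurons)
for cls, count in class_counts.items():
    label = ", ".join(cls) if cls else "none (not reliably responsive)"
    print(f"{label}: {count}")
```

In this made-up sample, the empty class is the largest, loosely mirroring the abstract's observation that the largest class is not reliably responsive to any of the stimuli.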

Authors

Saskia E. J. de Vries, Jerome A. Lecoq, Michael A. Buice, Peter A. Groblewski, Gabriel K. Ocker, Michael Oliver, David Feng, Nicholas Cain, Peter Ledochowitsch, Daniel Millman, Kate Roll, Marina Garrett, Tom Keenan, Leonard Kuan, Stefan Mihalas, Shawn Olsen, Carol Thompson, Wayne Wakeman, Jack Waters, Derric Williams, Chris Barber, Nathan Berbesque, Brandon Blanchard, Nicholas Bowles, Shiella D. Caldejon, Linzy Casal, Andrew Cho, Sissy Cross, Chinh Dang, Tim Dolbeare, Melise Edwards, John Galbraith, Nathalie Gaudreault, Terri L. Gilbert, Fiona Griffin, Perry Hargrave, Robert Howard, Lawrence Huang, Sean Jewell, Nika Keller, Ulf Knoblich, Josh D. Larkin, Rachael Larsen, Chris Lau, Eric Lee, Felix Lee, Arielle Leon, Lu Li, Fuhui Long, Jennifer Luviano, Kyla Mace, Thuyanh Nguyen, Jed Perkins, Miranda Robertson, Sam Seid, Eric Shea-Brown, Jianghong Shi, Nathan Sjoquist, Cliff Slaughterbeck, David Sullivan, Ryan Valenza, Casey White, Ali Williford, Daniela M. Witten, Jun Zhuang, Hongkui Zeng, Colin Farrell, Lydia Ng, Amy Bernard, John W. Phillips, R. Clay Reid and Christof Koch.

Acknowledgements

The authors thank the Animal Care, Transgenic Colony Management, and Lab Animal Services for mouse husbandry. We thank Z. J. Huang of Cold Spring Harbor Laboratory for use of the Fezf2-CreER line. We thank D. Denman, J. Siegle, Y. Billeh, and A. Arkhipov for critical feedback on the manuscript. This work was supported by the Allen Institute and in part by NSF DMS-1514743 (E.S.B.), the Falconwood Foundation (C.K.), the Center for Brains, Minds & Machines funded by NSF Science and Technology Center Award CCF-1231216 (C.K.), the Natural Sciences and Engineering Research Council of Canada (S.J.), NIH grant DP5OD009145 (D.W.), NSF CAREER Award DMS-1252624 (D.W.), Simons Investigator Award in Mathematical Modeling of Living Systems (D.W.), and NIH grant 1R01EB026908-01 (M.A.B., D.W.). We thank A. Jones for providing the critical environment that enabled our large-scale team effort. We thank the Allen Institute founder, Paul G. Allen, for his vision, encouragement, and support.


Jan 2 2019

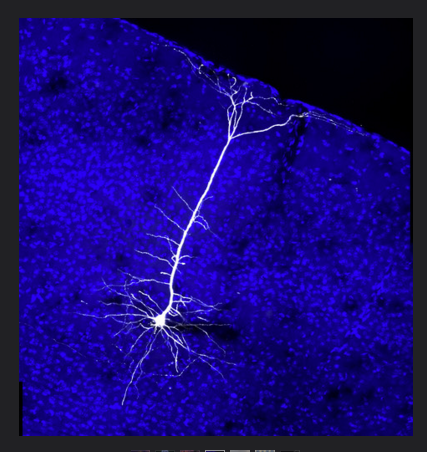

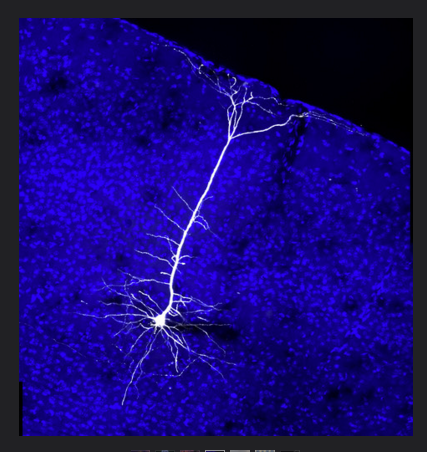

Neuron in Mouse Visual Cortex. CREDIT: Maximo Scanziani PhD, University of California at San Francisco.
